Source: IBM Cost of a Data Breach Report, 2025; CrowdStrike Charlotte AI, 2024; Palo Alto Networks Unit 42, 2024

Signature-based intrusion detection systems were built for a world of known threats. That world no longer exists. IBM’s 2025 Cost of a Data Breach Report found that organisations using AI-driven security tools detected breaches 108 days faster than those relying on traditional methods — and saved an average of $2.22 million per incident. As adversaries weaponise generative AI to craft polymorphic malware and zero-day exploits at industrial scale, the enterprise security industry is undergoing its most significant architectural shift in two decades: the migration from pattern-matching to machine-learning-based threat detection.

The signature problem

Traditional intrusion detection and prevention systems — IDS and IPS — operate on a conceptually simple model. Security researchers identify a malicious payload, extract a unique byte pattern, and distribute that signature to every sensor on the network. When traffic matches the pattern, the system fires an alert. The approach works reliably against known threats. It fails catastrophically against unknown ones.

The mathematics of the problem have turned decisive. AV-TEST, the independent security research institute, registers over 450,000 new malware samples and potentially unwanted applications every day. No human team can write signatures fast enough to keep pace. Worse, modern attack techniques — fileless malware, living-off-the-land binaries, encrypted command-and-control channels — leave no static signature to match against. Palo Alto Networks’ Unit 42 threat research division reported that 40% of the incidents it investigated in 2024 involved techniques that would evade conventional signature-based detection entirely.

The result is a detection gap that widens with every passing quarter. Enterprises that depend solely on legacy IDS/IPS are not merely accepting known risk — they are accumulating unknown risk at an accelerating rate.

How machine learning changes the equation

AI-driven threat detection inverts the traditional model. Instead of asking “does this traffic match a known bad pattern?”, machine-learning systems ask “does this traffic deviate from learned normal behaviour?” The distinction is profound. A signature-based system can only catch what it has seen before. A behavioural model can flag what it has never seen — precisely the category that matters most.

The approach relies on several complementary techniques. Supervised models trained on labelled datasets of malicious and benign traffic provide baseline classification. Unsupervised anomaly detection identifies statistical outliers in network flow data — unusual data volumes, atypical connection patterns, or communications with previously unseen endpoints. Deep-learning architectures, particularly recurrent neural networks and transformers, analyse sequences of network events to detect multi-stage attack campaigns that unfold over days or weeks, where no single event would trigger a traditional alert.

CrowdStrike’s Charlotte AI, introduced in 2024, exemplifies the operational shift. The platform correlates endpoint telemetry, identity data, and network traffic using large language models to generate natural-language threat assessments in real time. CrowdStrike reported that Charlotte AI reduced mean investigation time from 60 minutes to under 5 minutes for tier-one analysts — a 92% reduction that directly addresses the chronic staffing shortage in security operations centres. Darktrace, a British cybersecurity firm, takes an alternative approach with its Enterprise Immune System, which builds bespoke behavioural models for each customer network without relying on pre-existing threat intelligence. The company disclosed that its AI autonomously interrupted over 10 million threats per year across its customer base by 2025.

The false-positive trade-off

AI-driven detection is not without costs. The same sensitivity that catches novel threats also generates false positives — benign activities flagged as suspicious because they deviate from learned baselines. A developer downloading an unusually large dataset for a legitimate project, a marketing team launching a new SaaS tool without informing IT, or a server running a scheduled batch job at an atypical hour can all trigger behavioural alerts.

The industry has converged on a layered mitigation strategy. Initial anomaly scores are refined through ensemble models that cross-reference multiple data sources. Adaptive feedback loops incorporate analyst decisions — when a human marks an alert as a false positive, the model retrains. And increasingly, security orchestration platforms use reinforcement learning to tune alert thresholds dynamically, balancing detection sensitivity against analyst fatigue.

Microsoft’s Security Copilot, launched in 2024 and integrated across its Defender product suite, illustrates this feedback architecture. The system uses GPT-4-class models to contextualise alerts, correlate indicators of compromise across email, endpoint, and cloud workloads, and draft incident reports. Microsoft reported that Security Copilot increased the accuracy of junior analysts by 44% in controlled trials — a metric that matters enormously given that the global cybersecurity workforce gap reached 4.8 million unfilled positions in 2024, according to ISC2’s annual workforce study.

Enterprise adoption patterns

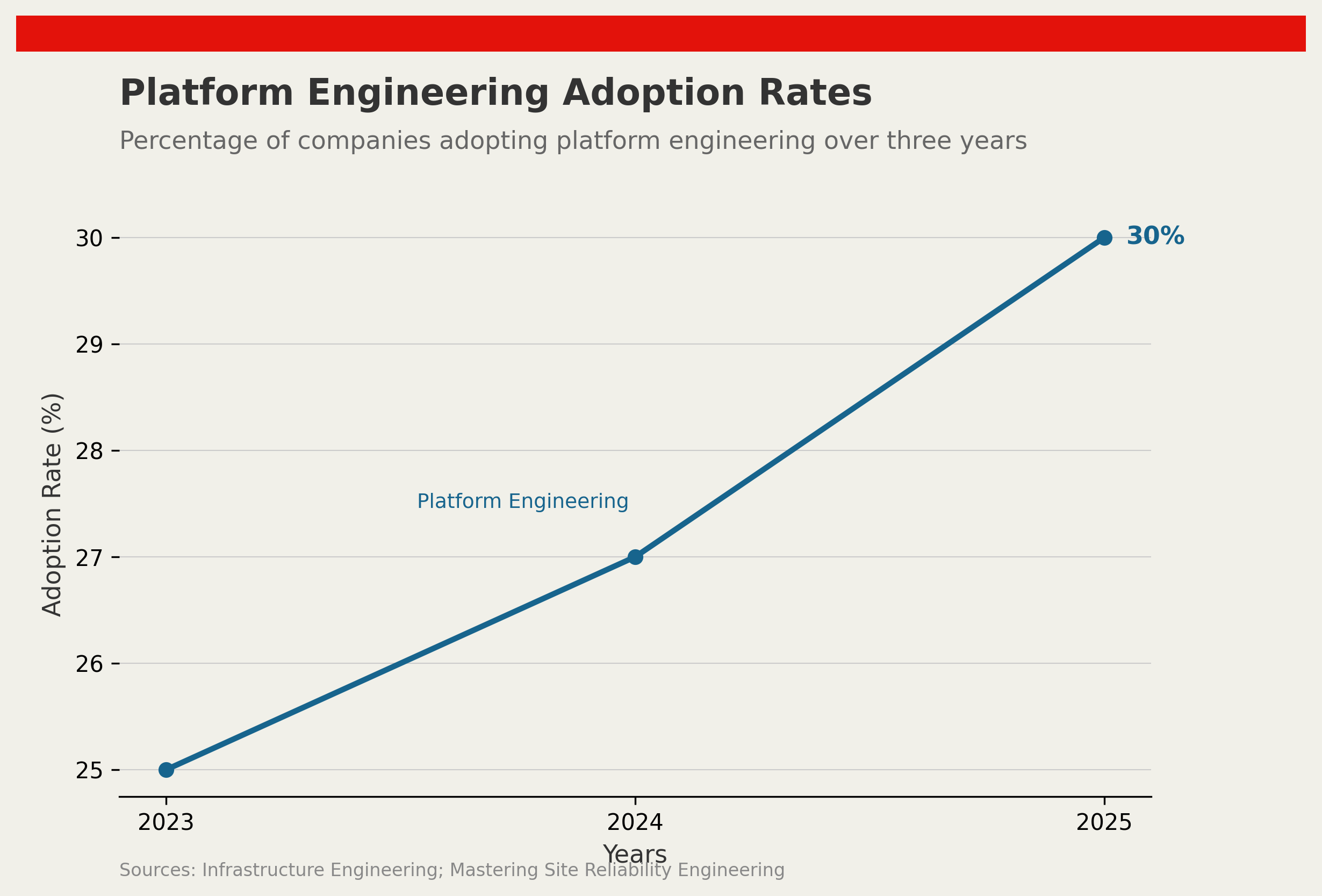

Adoption is accelerating but uneven. Gartner projected that by the end of 2026, 40% of enterprises will have deployed AI-augmented security operations, up from fewer than 5% in 2021. The largest deployments cluster in financial services, healthcare, and critical infrastructure — sectors where regulatory pressure and breach costs create strong economic incentives.

The deployment models vary. Some organisations embed AI detection within existing security information and event management (SIEM) platforms — Splunk, now owned by Cisco, and Microsoft Sentinel both offer native machine-learning modules. Others deploy dedicated network detection and response (NDR) solutions from specialists like Darktrace, Vectra AI, or ExtraHop. A third cohort builds bespoke models on internal data, an approach favoured by large banks with in-house data-science teams and proprietary threat-intelligence feeds.

The economics favour migration. IBM’s 2025 report calculated the average cost of a data breach at $4.88 million globally — the highest figure ever recorded. Organisations that had fully deployed AI in their security workflows reported average breach costs of $3.84 million, a $1.04 million saving. When detection speed, containment efficiency, and reduced analyst overhead are factored in, the return on investment for AI-driven security tools has become difficult to dispute.

The adversarial horizon

The arms race is far from settled. Attackers are already using generative AI to produce convincing phishing emails, deepfake voice messages for social engineering, and polymorphic malware that mutates its code with every execution. The same transformer architectures that power defensive tools are available to offensive actors — a symmetry that the security industry calls the “dual-use dilemma.”

The implications extend well beyond network perimeters. As organisations instrument more of their operations — from software supply chains to cloud-native microservices — the attack surface grows faster than human teams can monitor. This is the same scaling challenge that confronts quality engineering in software development: the volume and velocity of signals have outstripped manual inspection capacity, making AI augmentation not a luxury but an operational necessity.

The enterprises that will fare best are those treating AI-driven threat detection not as a product purchase but as a capability investment — retraining analysts, building feedback loops, and accepting that the models will be imperfect but improvable. In cybersecurity, as in most domains where AI is transforming operations, the competitive advantage belongs not to those with the best algorithms but to those with the best data, the fastest learning cycles, and the institutional willingness to trust — and verify — machine judgement.

References

- IBM Security, “Cost of a Data Breach Report 2025”, IBM, 2025

- AV-TEST Institute, “Malware Statistics & Trends Report”, AV-TEST, 2025

- Palo Alto Networks, “Unit 42 Incident Response Report 2024”, Palo Alto Networks, 2024

- ISC2, “Cybersecurity Workforce Study 2024”, ISC2, 2024

- Gartner, “Top Strategic Technology Trends 2026”, Gartner, 2025

Discussion